Finding the shortest path to AI-native

I have sat in enough boardrooms, product reviews, and operations calls to recognize a familiar pattern. The language changes faster than the system does.

A company says it is becoming AI-native. A team adds a model to an old workflow. A few internal demos go well. The roadmap gets rewritten in the new vocabulary. But underneath, most of the real decisions are still being made the old way.

That is why I keep coming back to a phrase I have heard in engineering and logistics for years: finding the shortest path.

On the surface, it sounds like an optimization problem. But after building across startups, enterprise systems, and operationally messy environments, I think it is really a strategic question. Not just how to get somewhere faster, but how to remove everything false between intention and reality.

That is what makes the current conversation around AI-native so interesting.

A lot of companies talk about becoming AI-native as if it were a branding milestone. A slide. A product feature. A line inserted into the CEO letter. But if we use the term seriously, it means something much more demanding. It means AI is not sitting on top of the business as a helper. It means the business itself has been redesigned so that AI is part of the operating substrate.

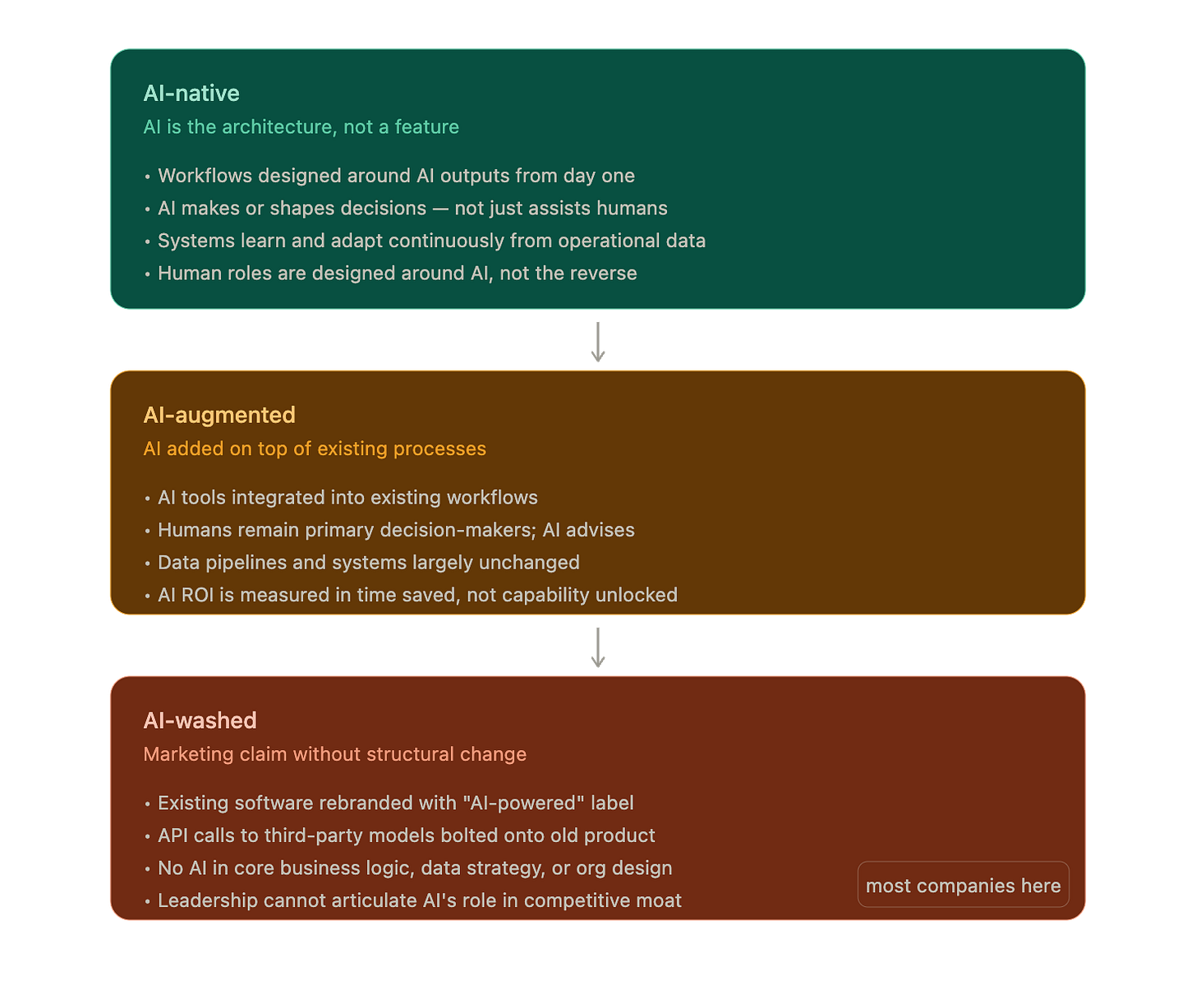

That is the difference between AI-washed, AI-augmented, and AI-native.

AI-washed is when an old product gets a new label. AI-augmented is when AI genuinely improves an existing workflow, but the workflow itself remains mostly human-designed and human-executed. AI-native is when the system, the data model, the decision logic, and even the human roles were built around AI from the beginning, or have been restructured deeply enough that AI is now on the critical path.

I made this mistake myself in the early days of building my first cross-border, end-to-end logistics platform. In 2010, as I built a system spanning order management through last-mile operations, I mistook better tools for deeper transformation. They are not the same thing. The clearest way I can describe it now is this: AI-native is not about having AI in the building. It is about letting AI change the floor plan.

The first analogy: the organizational shortest path

Many organizations say they want the shortest path to AI-native. What they usually mean is the shortest path to sounding AI-native.

That path is familiar. Add a chatbot to the website. Plug a model into a workflow. Ask teams to use copilots. Build a few demos. Rename the roadmap. Then speak as if the transformation has already happened.

But that is not the shortest path. That is the scenic route disguised as progress.

The real shortest path is more uncomfortable because it forces an organization to confront the truth of how decisions are actually made.

If every important output still waits for a human to review, approve, correct, route, and execute, then AI is not yet native. It may be useful. It may save time. It may even create real value. But it is still orbiting the core rather than sitting inside it.

This is where many leadership teams get trapped. They think AI maturity is about how many tools the company has adopted. In reality, the harder question is whether the company is willing to redesign operating authority, feedback loops, and role structure around what AI can now do.

That requires clarity across five dimensions.

The first is architecture. Core workflows, data schemas, APIs, and latency assumptions have to be designed with AI inference in mind, not retrofitted later.

The second is decision authority. AI cannot only advise; it has to close a meaningful share of decisions end to end within policy bounds.

The third is data infrastructure. Operational data has to flow back into model improvement, not only into dashboards and monthly reporting.

The fourth is organizational design. Human roles need to shift toward policy-setting, exception handling, and audit rather than routine execution.

The fifth is moat. If AI were removed, the company should not merely slow down a little; it should lose a real part of its competitive advantage.

That last point matters more than most people admit.

If removing AI leaves you with basically the same business, just with more manual effort, then you are probably not AI-native. You are AI-augmented. There is nothing wrong with that. In fact, many good companies should honestly describe themselves that way today. But the distinction matters because strategy becomes distorted when vocabulary becomes dishonest.

A serious company should be able to answer a few objective questions.

Is AI on the critical path of major transactions? What percentage of decisions are actually closed by AI? Does more usage make the system better through a real data flywheel? Is headcount leverage improving because of AI, or are humans still scaling linearly with volume?

These are not marketing questions. They are operating questions.
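To make two of those operating questions concrete, here is a minimal sketch of how a decision closure rate and a rough headcount-leverage figure could be computed from a decision log. The `Decision` fields, the `"ai"` and `"human"` labels, and both function names are assumptions made for illustration, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    decided_by: str      # hypothetical labels: "ai" or "human"
    human_touched: bool  # True if a person reviewed, corrected, or rerouted it


def closure_rate(decisions: list[Decision]) -> float:
    """Share of all decisions that the AI closed end to end with no human touch."""
    if not decisions:
        return 0.0
    closed = sum(
        1 for d in decisions if d.decided_by == "ai" and not d.human_touched
    )
    return closed / len(decisions)


def volume_per_head(transactions: int, ops_headcount: int) -> float:
    """Transactions handled per operations person; should rise if leverage improves."""
    if ops_headcount <= 0:
        raise ValueError("ops_headcount must be positive")
    return transactions / ops_headcount


# Hypothetical log: two decisions closed by AI, one AI decision that a
# human had to correct, and one decision made entirely by a human.
log = [
    Decision("ai", False),
    Decision("ai", False),
    Decision("ai", True),
    Decision("human", True),
]
print(closure_rate(log))  # 0.5
```

The point of counting a human-corrected AI decision as not closed is that a suggestion someone still has to fix is augmentation, not ownership.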

And this is why I keep coming back to the idea of the shortest path. The shortest path is rarely the one with the best narrative. It is the one that removes ceremonial work. It is the one that asks, with some honesty, where the decision really happens.

The second analogy: the shortest path in logistics

This becomes much easier to understand when you stop talking in abstractions and move into operations.

Take a hypothetical last-mile delivery company in Singapore. Not a normal motorbike or van fleet. Imagine a company whose riders use the MRT as part of the delivery network for dense urban movement.

Now the phrase finding the shortest path becomes literal.

But in this operating model, the shortest path is not simply the shortest distance on a map.

It is the shortest executable path through a constrained system.

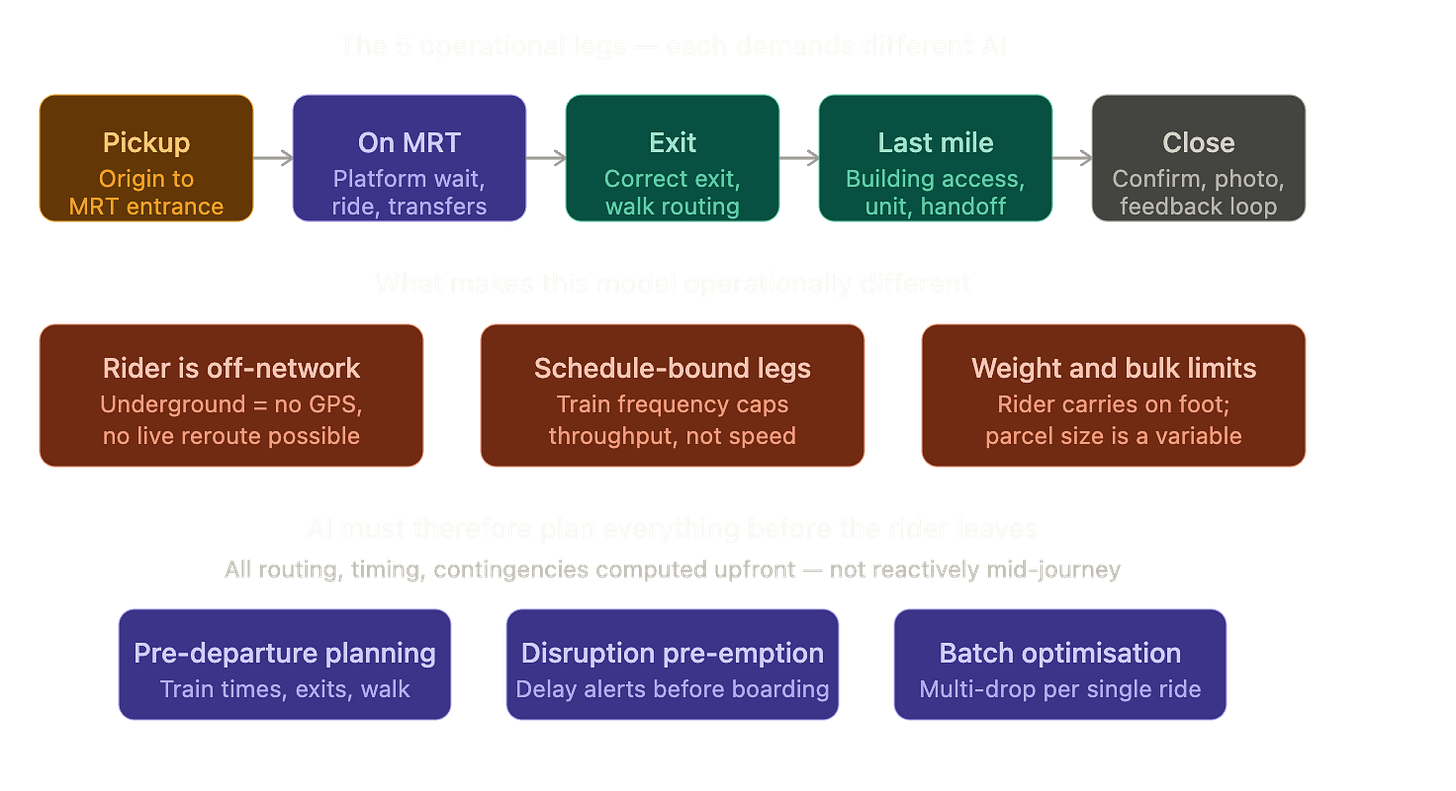

A rider starts at pickup, enters the MRT network, goes underground, loses GPS reliability, emerges at the destination station, exits onto the correct street, navigates the final walking path, accesses the building, and completes handoff. The route is shaped by train schedules, transfers, station geometry, exit placement, walking distance, parcel size, accessibility, and the fact that once the rider is underground, live rerouting becomes much harder.
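The idea of a shortest executable path, as opposed to a shortest distance, can be sketched with a small graph search. This is a minimal illustration using Dijkstra's algorithm on a made-up network: the node names, travel times, and the `stairs_only` flag are all hypothetical, and a real planner would also need train schedules, transfers, and exit closures.

```python
import heapq

# Hypothetical network: (from, to, minutes, stairs_only).
EDGES = [
    ("pickup", "station_A", 4, False),     # walk to the station
    ("station_A", "station_B", 6, False),  # train leg
    ("station_B", "exit_1", 1, True),      # short exit, stairs only
    ("station_B", "exit_2", 3, False),     # longer exit with lift access
    ("exit_1", "door", 2, False),
    ("exit_2", "door", 5, False),
]


def shortest_executable_path(edges, start, goal, needs_lift=False):
    """Dijkstra over only the edges this rider and parcel can actually use."""
    graph = {}
    for src, dst, minutes, stairs_only in edges:
        if needs_lift and stairs_only:
            continue  # drop legs that are not executable for this job
        graph.setdefault(src, []).append((dst, minutes))

    queue = [(0, start, [start])]
    settled = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in settled:
            continue
        settled.add(node)
        for nxt, minutes in graph.get(node, []):
            if nxt not in settled:
                heapq.heappush(queue, (cost + minutes, nxt, path + [nxt]))
    return None  # no executable route exists


print(shortest_executable_path(EDGES, "pickup", "door"))
# (13, ['pickup', 'station_A', 'station_B', 'exit_1', 'door'])
print(shortest_executable_path(EDGES, "pickup", "door", needs_lift=True))
# (18, ['pickup', 'station_A', 'station_B', 'exit_2', 'door'])
```

In this toy network the same delivery takes a different exit once the parcel needs a lift, even though the map has not changed. The constraint, not the distance, decides the route.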

That changes what an AI-native last-mile platform would have to be.

A normal AI-augmented platform might do something modest but helpful. It could suggest which station to use. It could summarize delivery notes. It could recommend an exit to the dispatcher. But the human operator would still coordinate the job, review the recommendation, message the rider, and absorb the failure when the route breaks.

An AI-native platform would look different.

Before a rider leaves the pickup point, the system would already have decided the full mission plan within policy limits: which MRT leg to take, which exit to use, which street the exit faces, which landmarks matter, whether lift access is available, whether the parcel is suitable for foot-carry, whether station congestion or exit closure changes the recommendation, and whether multiple drops can be batched into a single ride without breaking SLA.

The rider would not be waiting for human dispatch judgment as the default path. Human operators would step in only for exceptions: an inaccessible building, a parcel too bulky to carry, a station disruption, or a customer instruction that falls outside policy.
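The "autonomous by default, humans for exceptions" pattern can be expressed as a small policy check. The sketch below is illustrative only: the `Job` fields, the `MAX_FOOT_CARRY_KG` limit, and the action names are assumptions chosen to mirror the four exceptions just listed.

```python
from dataclasses import dataclass

MAX_FOOT_CARRY_KG = 10.0  # hypothetical policy limit


@dataclass
class Job:
    parcel_kg: float
    station_disrupted: bool
    building_accessible: bool
    instruction_in_policy: bool


def dispatch(job: Job) -> tuple[str, list[str]]:
    """Return the default action plus any reasons the job left the default path."""
    reasons = []
    if job.parcel_kg > MAX_FOOT_CARRY_KG:
        reasons.append("parcel too bulky to carry")
    if job.station_disrupted:
        reasons.append("station disruption")
    if not job.building_accessible:
        reasons.append("inaccessible building")
    if not job.instruction_in_policy:
        reasons.append("customer instruction outside policy")

    if reasons:
        return "escalate_to_human", reasons
    return "auto_assign", []


routine = Job(2.5, False, True, True)
bulky = Job(18.0, False, True, True)
print(dispatch(routine))  # ('auto_assign', [])
print(dispatch(bulky))    # ('escalate_to_human', ['parcel too bulky to carry'])
```

The design choice worth noticing is the direction of the default: the function assigns unless a named policy condition fails, rather than waiting for approval unless someone waves the job through.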

That is where the technology design and the operational design meet. In an AI-augmented version of the company, dispatch still belongs mostly to humans. The AI suggests a route, but a dispatcher approves it. Transit planning remains fairly static. Exit intelligence is limited to basic station or coordinate lookup. Failed deliveries are reviewed manually, and the operations team still manages most delivery decisions directly.

In an AI-native version, that same flow changes character. The AI assigns the route and the exit autonomously within policy. Pre-departure planning is optimized around train timings, transfer risk, and walkability. Exit intelligence becomes much richer, incorporating street-facing direction, nearby landmarks, lift access, and real-time overrides. Delivery outcomes continuously improve routing logic and operating policy. And the operations team shifts away from managing every decision toward managing policies, exceptions, and system performance.

That is the real threshold.

If the AI only helps a dispatcher work faster, the company is more intelligent than before, but not yet AI-native. If the AI becomes the dispatch layer, the planning layer, and the learning layer, with humans moving into supervisory roles, then the claim becomes much more credible.

Where MCP fits, and where it does not

This is also why I have been thinking about one of my recent open-source projects, the SG MRT Exits MCP Server.

The project is useful because it exposes structured exit-level information to AI agents. Instead of leaving the model to reason from a raw map pin, the system can answer questions like which exit is nearest, what the exit label is, what street it faces, and what landmarks are nearby. For any agent trying to reason about dense urban movement in Singapore, that is a meaningful improvement in context quality.
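To show what exit-level context looks like compared with a raw map pin, here is a minimal sketch of a nearest-exit lookup. It is not the SG MRT Exits MCP Server's actual API or data: the `StationExit` record, the `nearest_exit` function, the station name, the coordinates, and the landmarks are all hypothetical placeholders.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class StationExit:
    station: str
    label: str
    lat: float
    lon: float
    faces_street: str
    landmarks: tuple
    has_lift: bool


def haversine_m(lat1, lon1, lat2, lon2) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    r = 6_371_000  # mean Earth radius in metres
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def nearest_exit(exits, lat, lon, require_lift=False) -> Optional[StationExit]:
    """Pick the closest exit, optionally restricted to exits with lift access."""
    candidates = [e for e in exits if e.has_lift or not require_lift]
    if not candidates:
        return None
    return min(candidates, key=lambda e: haversine_m(lat, lon, e.lat, e.lon))


# Made-up records for a fictional station.
EXITS = [
    StationExit("Example Station", "A", 1.3000, 103.8500, "Example Road", ("Example Mall",), False),
    StationExit("Example Station", "B", 1.3010, 103.8510, "Sample Street", ("Sample Tower",), True),
]

best = nearest_exit(EXITS, 1.3001, 103.8501)
print(best.label, best.faces_street)  # A Example Road
```

An agent that receives a record like this can reason about the street, the landmarks, and lift access directly, instead of inferring them from coordinates.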

But building a good MCP server, even a full-fledged one with multiple tools, does not automatically make the surrounding business AI-native.

It makes the AI better informed.

That is important, but it is not the same thing.

An MCP server is a structured interface between an agent and the world. It is a tool layer. Whether the broader system is AI-native depends on what sits above and below that layer.

Above it, you need an AI agent or decision engine that actually uses that context to make consequential decisions on the critical path.

Below it, you need feedback, proprietary data accumulation, operational integration, and organizational redesign so the outputs are not just interesting answers but executed actions.

In other words, a tool layer such as MCP is necessary in many AI systems, but it is not sufficient for AI-native status.

A company could build excellent MCP servers and still remain AI-augmented if humans continue to make the real decisions. On the other hand, the same MCP foundation can become part of an AI-native architecture if it feeds an autonomous decision loop with measurable closure, learning, and leverage.

This is the honest way I would describe a startup using something like the SG MRT Exits MCP Server today: not fully AI-native yet, but meaningfully on the path.

Why? Because exposing tools to agents is an architectural choice that shows AI-native thinking. It treats AI as a first-class consumer of structured operational context. That matters. But if the agent still only recommends while people still decide, coordinate, and recover manually, then the business has not crossed the line.

What would make the MRT delivery company truly AI-native

To push the hypothetical MRT-based last-mile company across that threshold, several things would need to happen at the same time.

First, it would need loop closure. The agent must assign riders, choose exit strategies, and issue executable plans by default, with exceptions routed to humans rather than the reverse.

Second, it would need proprietary context layers beyond public data. Static MRT exit geometry is useful, but not enough. The company would need its own operational knowledge about which exits are faster in practice, which ones are frequently obstructed, which buildings are easiest to approach from which side, which exits are unsuitable for heavy parcels, and how station-to-door walking times differ by time of day.

Third, it would need a real data flywheel. If riders ignore a recommended exit because construction made it unusable, that signal should flow back into the system. If one route consistently misses SLA at 8:30 a.m. but performs well at 11:00 a.m., the platform should learn from that. If one building’s loading access is always delayed without a call-ahead, the planning logic should absorb that too.

Fourth, it would need organizational redesign. The question would no longer be, “How do we help dispatchers make better decisions?” It would become, “How do we define policy boundaries, exception protocols, and model performance metrics so dispatch scales without adding linear headcount?”

That is the difference between automation inside the workflow and redesign of the workflow itself.
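The data flywheel described above can be illustrated with a very small learning loop. This sketch assumes an exponential moving average over observed station-to-door walking times, keyed by exit and hour of day. The `WalkTimeModel` class, its parameters, and the sample numbers are hypothetical; a production system would likely use something richer.

```python
class WalkTimeModel:
    """Keeps a running estimate of walking minutes per (exit, hour of day)."""

    def __init__(self, prior_minutes: float, alpha: float = 0.2):
        self.prior = prior_minutes  # estimate used before any observations
        self.alpha = alpha          # weight given to each new observation
        self.estimates = {}

    def estimate(self, exit_id: str, hour: int) -> float:
        return self.estimates.get((exit_id, hour), self.prior)

    def record(self, exit_id: str, hour: int, observed_minutes: float) -> float:
        """Blend a completed delivery's observed time into the estimate."""
        old = self.estimate(exit_id, hour)
        new = (1 - self.alpha) * old + self.alpha * observed_minutes
        self.estimates[(exit_id, hour)] = new
        return new


model = WalkTimeModel(prior_minutes=5.0, alpha=0.5)
model.record("exit_B", 8, 9.0)       # a slow morning delivery comes back
print(model.estimate("exit_B", 8))   # 7.0
print(model.estimate("exit_B", 11))  # 5.0
```

Because estimates are kept per hour, a route that struggles in the morning peak does not penalize the same route late in the morning. The planner reads these estimates on the next job, which is what turns completed deliveries into better decisions rather than into a report.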

And this is where I think a lot of AI conversations still miss the point. People often ask what model to use, what tool to integrate, or what agent framework to adopt. Those are valid questions, but they are downstream questions.

The upstream question is whether you are redesigning the company so that AI can take the shortest path from information to action.

The strategic test

When founders or executives say they want to become AI-native, I think there is one question they should ask themselves before anything else.

If AI were allowed to operate at full strength inside our business, what decisions would it actually own, what feedback would make it better, and which parts of our current organization would need to be redesigned because of that?

That question is strategic because it forces honesty about architecture, authority, data, org structure, and moat all at once.

And when building an AI-native product or solution, the domain-specific version of the same question becomes even sharper.

What is the shortest executable path from raw context to successful action in this domain, and have we designed both the technology stack and the operating model so AI can own that path by default, with humans handling only policy and exceptions?

For an MRT-based last-mile platform, that means asking whether the system can move from pickup to station choice to exit choice to handoff completion as one coherent AI-led chain, rather than as a collection of disconnected recommendations. For other industries, the path will look different, but the principle stays the same.

That, to me, is what AI-native actually means.

Not AI everywhere.

Not AI as branding.

Not AI as a feature added to old assumptions.

But a system, and an organization, rebuilt around the shortest path between knowing and doing.