Introducing my cofounder and my team

What building with AI really looks like, and why the definition of a company is changing faster than most people are ready for.

There is a moment in baseball, and I have watched it happen enough times to know it by feel, when a manager walks out to the mound, not to pull his pitcher, but just to talk. To recalibrate. To remind the pitcher what they are actually good at, before the situation deteriorates further. The pitcher knows what to do. They have been trained. They have done it hundreds of times. They just need someone to structure the moment and point them back to their strengths.

That is, more or less, what I did when I started building my AI team.

I am not talking about a team of full-time employees. I am talking about something new. A set of collaborators that do not sleep, do not negotiate salary, and do not need to be managed in the traditional sense. They need to be instructed. And the quality of that instruction determines everything.

This post is about that team. What it looks like. How it was built. Why it works. And honestly, what it still cannot do, because I have no interest in making this sound shinier than it is.

The company has changed. Not all companies. But enough.

Let me be precise here, because I think the sweeping declarations about AI replacing everything are both unhelpful and slightly dishonest. Not every industry is being reshaped at the same velocity. A restaurant, a law firm defending criminal clients, a physiotherapy clinic. These still depend on physical presence, human judgment, and trusted relationships in ways that no AI product is yet fully replacing.

But for founders, startup operators, product builders, and independent professionals working in knowledge, content, sales, systems, and digital services? The ground is shifting. Quickly. And the shift is not primarily about automation. It is about leverage.

The old model said: hire people who have the skills you lack. The new model says: build systems that extend your capability, and hire people who can operate and improve those systems alongside you. The new model does not eliminate people. It raises the bar for what kind of people and what kind of contribution is actually necessary.

In a startup with five people, every single person used to be responsible for a distinct function. Now, one person with well-structured AI workflows can cover the surface area that once required three. That is not a reason to lay people off. It is a reason to think very differently about what a role means, what a company needs, and who belongs on a founding team.

I have been thinking about this for longer than I have been willing to say publicly. And I have been quietly building. This post is me finally talking about what that building actually looks like.

Before I go deep on the tools and the technical architecture, let me introduce you to the team the way I would introduce a new hire cohort to a company. Not by job title. By function and contribution.

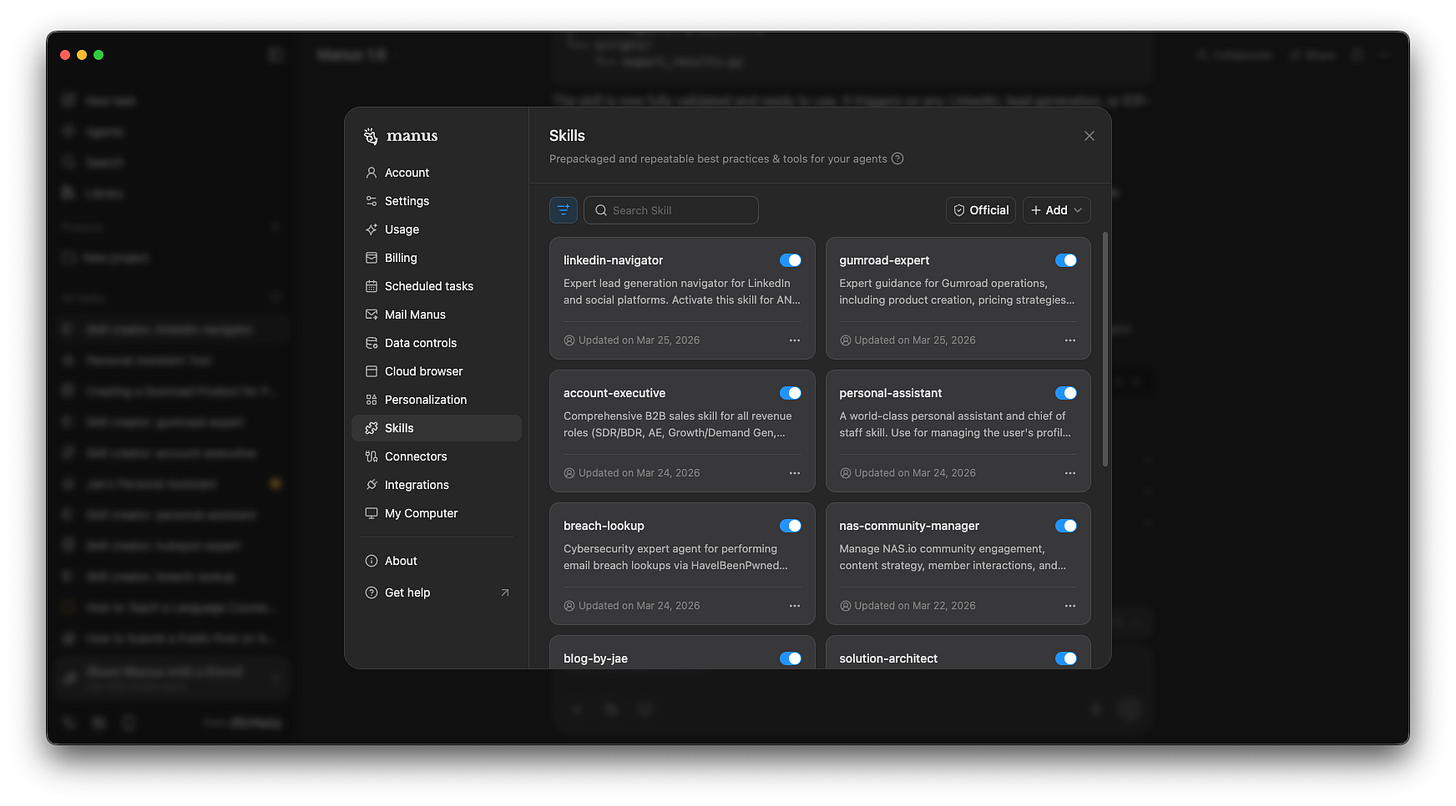

Across two environments, Manus AI and Claude AI, I have built a set of AI skills. Think of a skill not as a chatbot, but as a trained specialist. Each one has a defined mandate, a set of operating instructions, reference materials, and a specific workflow for how it does its job. Each one is built to do one thing well, repeatedly, without drifting.

Here is the roster at a glance:

Cofounder: My stress-testing partner. Challenges my ideas, pokes holes in my logic, rewrites weak problem statements, and helps me sharpen pitches and product hypotheses.

Personal Assistant: My chief of staff. Manages my profile, schedule, tasks, budget checks, and multi-skill orchestration.

LinkedIn Navigator: My lead generation and prospecting specialist. Runs ICP development, LinkedIn search strategy, buyer persona profiling, and SDR/BDR outreach copy.

Account Executive: My full-stack B2B revenue operator. Covers the entire sales cycle from cold outreach to closing, including Apollo.io workflows, MEDDPICC qualification, pipeline management, and founder-led sales.

Global HR & TA Specialist: My talent and people advisor. Supports resume evaluation, hiring strategy, talent acquisition frameworks, and job market guidance across global markets.

Gumroad Expert: My digital product and creator economy operator. Handles Gumroad setup, product positioning, pricing strategy, listing copy, and creator storefront growth.

Nas.io Community Manager: My community management operator for Nas.io communities. Manages engagement, content, and member support for that specific audience.

Social Media Manager: My content distribution operator. Plans, scripts, formats, and optimises content for social media channels, particularly short-form and platform-native formats.

Blog-by-Jae: My editorial voice. Writes long-form blog posts in my exact voice, tone, and storytelling structure. This post was written with its guidance.

Solution Architect: My technical design partner. Turns product ideas, system requirements, and app briefs into architecture diagrams, technical specs, and LLM-ready development instructions.

Odoo Expert: My ERP and operations specialist. Handles Odoo configuration, module selection, workflow design, and implementation guidance for business operations.

Breach Lookup: My security intelligence specialist. Handles credential breach monitoring and lookups to support data protection hygiene.

Twelve specialists. All available simultaneously. None of them require onboarding, performance reviews, or catering at the all-hands. What they do require, and this is the part that most people underestimate, is quality instruction.

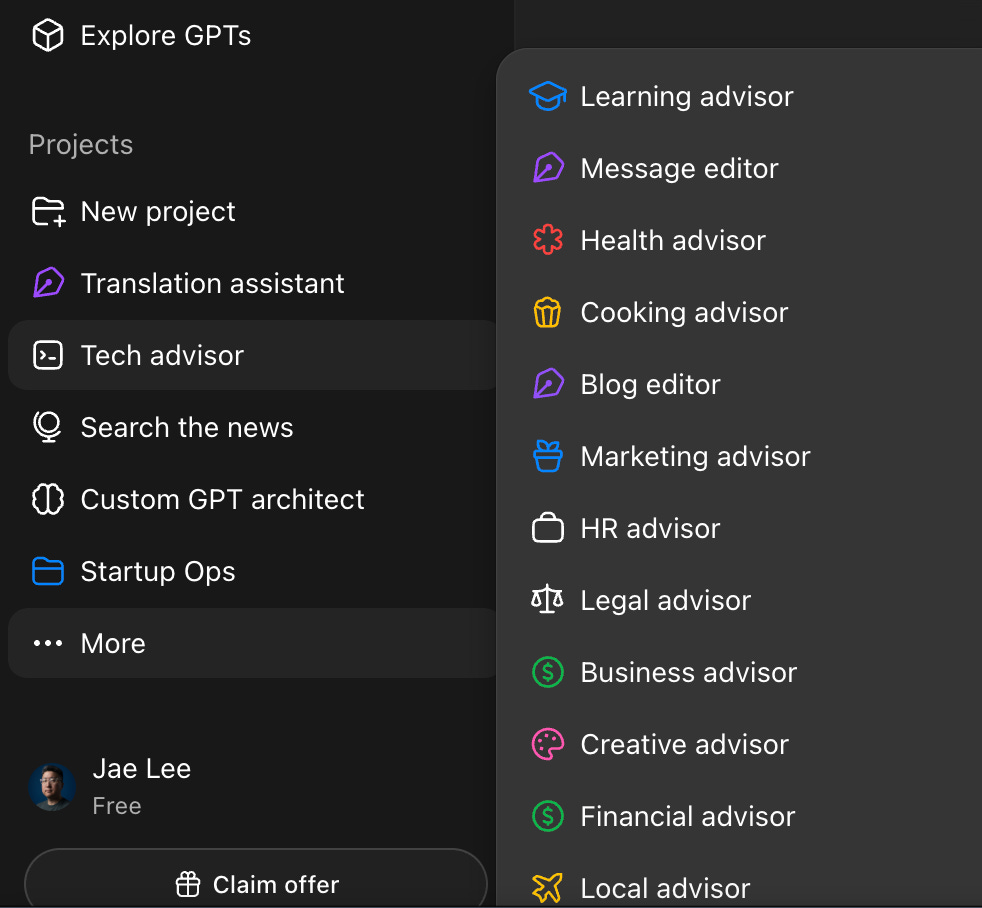

The simplest starting point: ChatGPT Projects

Before we get into the more sophisticated infrastructure, let me start where most people start, and where I still operate for many things. ChatGPT Projects.

If you are new to this, here is the clearest analogy I can give you: a ChatGPT Project is like a dedicated notebook for a specific area of your work, with a standing briefing note taped to the inside cover. Every conversation that happens inside that notebook reads the briefing note first. So when you open the notebook and ask a question, the AI already knows who it is supposed to be and what context it is working inside.

That briefing note is called a system prompt. And it is one of the most underrated tools in practical AI usage today.

What is a system prompt, and why does it matter?

A system prompt is a set of instructions given to an AI before the conversation begins. It defines the role, the tone, the scope, the constraints, and the operating context. It is not a question. It is a mandate.

Think of it like a job description, an onboarding document, and a brand voice guide rolled into one paragraph. When it is written well, the AI behaves like a trained specialist. When it is vague or absent, the AI behaves like a talented generalist who is guessing what you need.

The difference between a useful AI interaction and a frustrating one is almost always the quality of the setup, not the capability of the model. Most people blame the model. The real issue is the instruction.
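To make the briefing-note idea concrete, here is a minimal sketch in Python of how the five elements (role, tone, scope, constraints, operating context) might be assembled into one system prompt and placed ahead of the user's question. In ChatGPT Projects you simply type this text into the instructions field, so no code is needed; the `SystemPrompt` class, the `build_messages` helper, and the example values are all hypothetical, and the `system`/`user` message layout is the common chat-message convention, assumed here for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SystemPrompt:
    """The elements of a system prompt; every value used below is illustrative."""
    role: str
    tone: str
    scope: str
    constraints: list[str] = field(default_factory=list)
    context: str = ""

    def render(self) -> str:
        """Flatten the fields into the text the model reads before the conversation."""
        lines = [
            f"Role: {self.role}",
            f"Tone: {self.tone}",
            f"Scope: {self.scope}",
        ]
        if self.context:
            lines.append(f"Operating context: {self.context}")
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {c}" for c in self.constraints)
        return "\n".join(lines)


def build_messages(prompt: SystemPrompt, user_question: str) -> list[dict[str, str]]:
    """Put the mandate first, so it is read before the question."""
    return [
        {"role": "system", "content": prompt.render()},
        {"role": "user", "content": user_question},
    ]


# Hypothetical example: a stress-testing partner for pitches.
cofounder = SystemPrompt(
    role="Cofounder who stress-tests ideas",
    tone="Direct and specific",
    scope="Pitches, problem statements, product hypotheses",
    constraints=["Challenge weak logic before offering fixes"],
    context="Early-stage startup, solo founder",
)
messages = build_messages(cofounder, "Here is my pitch. Where does it break?")
print(messages[0]["content"])
```

The point of the structure is that a missing field is visible. A prompt with no scope or no constraints is exactly the vague setup that leaves the model guessing.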

The beauty of ChatGPT Projects is simplicity. No code. No integration. Just a well-written system prompt and a consistent workspace. For quick advisory loops, drafting, and thinking out loud with context, Projects are fast and effective.

But they have a ceiling. And that ceiling is where Custom GPTs come in.

Custom GPTs: When you need more than a system prompt

If ChatGPT Projects are a notebook with a standing briefing note, then Custom GPTs are a specialist who has been trained, given reference documents, handed a set of tools, and told exactly how to handle specific types of requests.

The technical distinction matters, so let me be direct about it:

A ChatGPT Project is a persistent chat context with global instructions. A Custom GPT is a purpose-built AI application with grounded knowledge, specific capabilities, and optional third-party integrations.

A Custom GPT can be given uploaded documents that it references when answering, so it is not just guessing from general training data; it is drawing from your specific materials. It can be connected to external APIs. It has a configured conversation style, a defined scope, and a persona that is consistent across all users who interact with it. It is, in essence, a lightweight product, not just a chat thread.

The tradeoff: Custom GPTs take more time to build well, and their quality depends heavily on the design of their knowledge base and instructions. They are also more appropriate for repeatable, structured use cases than for open-ended advisory conversations.

Now here is the honest distinction between using a Custom GPT and using an agent for content generation: a Custom GPT gives you grounded, structured, reference-informed responses in a consistent format. It is excellent for repeatable deliverables, such as a resume review, a translated phrase, a trip itinerary, or a technical spec. An agent, by contrast, can take multi-step autonomous action. It can search, retrieve, synthesise, and produce across a workflow, not just in a single response. They are different tools for different jobs. The mistake is treating them as interchangeable.
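The contrast between one response and a multi-step workflow can be sketched in a few lines of Python. This is a toy under stated assumptions, not how any particular product works: the four tools are hypothetical stubs that only tag their input, and the plan is fixed by hand, whereas a real agent would decide its own next step.

```python
from typing import Callable

Tool = Callable[[str], str]

# Hypothetical stub tools. Real ones would call a search API, a document
# store, or a model; these only wrap their input so the flow is visible.
TOOLS: dict[str, Tool] = {
    "search": lambda text: f"sources for [{text}]",
    "retrieve": lambda text: f"passages from {text}",
    "synthesise": lambda text: f"summary of {text}",
    "produce": lambda text: f"final draft based on {text}",
}


def single_response(request: str) -> str:
    """Custom GPT style: one request in, one answer out."""
    return TOOLS["produce"](request)


def run_agent(request: str, plan: list[str]) -> tuple[str, list[str]]:
    """Agent style: run each step in order, feeding every output into the next."""
    state = request
    completed: list[str] = []
    for step in plan:
        state = TOOLS[step](state)
        completed.append(step)
    return state, completed


result, completed = run_agent(
    "pricing page", ["search", "retrieve", "synthesise", "produce"]
)
print(single_response("pricing page"))
print(result)
print(completed)
```

The single response works only from what you hand it. The agent's final draft is built on three earlier steps it carried out itself, which is both the source of its leverage and the reason each step needs to be reliable.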

Agent skills on Manus and Claude

Now we get to the part of the stack that I find genuinely exciting, and that I also think is the most misunderstood.

Custom GPTs and ChatGPT Projects are conversational tools. They are excellent. But they are fundamentally reactive. You ask; they respond. The work of sequencing, deciding what to do next, navigating across systems, and executing a multi-step workflow still falls to you.

Agent skills are different. A well-designed skill is not just a set of response instructions. It is an operating procedure. It describes the objective, the workflow steps, the inputs and outputs, the decision logic, the tools to use, and the acceptance criteria for a completed task. When you give a capable AI agent a well-written skill, you are not starting a conversation. You are delegating a workstream.

I currently run skills across two environments: Manus AI and Claude AI. Each has different strengths. Manus is my primary environment for agentic execution: browser automation, multi-step task completion, long-horizon workflows. Claude is my primary environment for deep reasoning, writing, and structured document work. The skills I build for each are tuned to those strengths.

What makes a skill different from a system prompt?

Think of a system prompt as a job description. It tells the AI who it is and how to behave. A skill is more like a standard operating procedure. It tells the AI what to do, in what order, with what tools, and how to verify that the work is done correctly. The best skills I have built include: the purpose and scope of the role, the specific workflow steps broken into phases, the inputs required and outputs expected, the tools and resources available, edge cases and error handling, and the quality bar for the final deliverable.
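One way to picture that checklist is as a structured record with a completeness check. The sketch below is an illustration in Python, not the file format Manus or Claude actually uses for skills; the `Skill` class, its field names, and the example values are all hypothetical, chosen to mirror the elements listed above.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Skill:
    """A skill as a standard operating procedure; field names are illustrative."""
    purpose: str = ""                                          # purpose and scope of the role
    workflow_phases: list[str] = field(default_factory=list)   # steps, grouped into phases
    inputs: list[str] = field(default_factory=list)            # what the skill needs to start
    outputs: list[str] = field(default_factory=list)           # what it must hand back
    tools: list[str] = field(default_factory=list)             # tools and resources available
    edge_cases: list[str] = field(default_factory=list)        # edge cases and error handling
    quality_bar: str = ""                                      # acceptance criteria for the deliverable

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty, in definition order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical first draft of a prospecting skill.
draft = Skill(
    purpose="Build a shortlist of prospects that match a given ICP",
    workflow_phases=["Clarify ICP", "Search", "Profile", "Draft outreach"],
)
print(draft.missing_sections())
```

A draft that still reports missing sections is closer to a system prompt than to a skill. It says who the specialist is, but not yet what done looks like.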

The difference in output quality between a well-structured skill and a loosely written one is not subtle. It is the difference between a reliable team member and someone who is talented but needs constant supervision.

Friction, limits, and what still needs humans

I would be doing you a disservice if I ended this post without being direct about where things break down. Because they do break down. And I think the people who pretend otherwise are selling something.

Browser automation is the one obvious gap right now. Agentic AI tools are capable of extraordinary things when working inside well-structured data environments: documents, APIs, databases, structured inputs. But the moment you ask an agent to navigate a real web interface, to scroll a page, handle a popup, or interact with a dynamic JavaScript-rendered UI, the reliability drops noticeably. It works. Sometimes it works well. But it is not at the level where I can set it and forget it for browser-dependent workflows without expecting to check in.

This is not a criticism of any single product. It is the current state of the technology. And when it is resolved, when browser automation becomes as reliable as document processing, the agentic experience will take a significant leap. The wings are almost there. The flight is still a little wobbly.

Here is where I want to be honest about something that is harder to say cleanly.

Human contributors still matter. They matter a lot. But the bar for what constitutes real contribution has risen sharply, and I think pretending otherwise is a disservice to everyone involved.

The people I most want to work with now are not people who have impressive titles or long CVs. They are people who know their own knowledge precisely, who can tell me, specifically, what they are good at, what their process looks like, what outcomes they have produced, and where their thinking breaks down. That level of self-knowledge is rare. And it is now more valuable than general capability, because general capability is increasingly available from the tools.

What I want to avoid at all costs (in human collaborators, and in AI systems) is vagueness. The person who cannot articulate the objective. The person who cannot walk me through their process. The person who responds to ‘how would you approach this?’ with a list of soft skills and good intentions. Vagueness used to be acceptable because experience was hard to transfer. Now, vagueness is a liability. Because the ability to transfer knowledge, to articulate it clearly enough that a system, a junior, or an AI can act on it, is exactly what determines how much leverage you can create.

I am not trying to be harsh about this. I genuinely want to work with other founders and operators who have done the work of understanding themselves. Who have their own processes, their own frameworks, their own hard-won convictions about how things should be done. Even if those frameworks are unconventional. Even if they have not been widely validated. The specificity is the value. A person with a clear, structured, idiosyncratic view of how to do their job is infinitely more useful in an AI-augmented environment than a person with a vague but widely acceptable one.

Because structured, specific knowledge, articulated logically, with clear inputs, expected outputs, potential failure modes, and known constraints, is exactly what gets transformed into a working skill. And a working skill, given to a capable agent, becomes leverage.

The people who thrive in this new environment will not be the ones who were the most generally capable. They will be the ones who were the most clearly self-aware about what they actually know and how they actually work.

I started this post by talking about a baseball manager walking to the mound. The manager does not throw the pitches. The manager creates the conditions under which the pitcher can throw them well.

The company I am building does not look like the companies that came before it. It is leaner by design. It is more leveraged by intention. It requires me to be more self-aware, more structured, and more articulate about how I work than any previous stage of my career has demanded.

I would not have it any other way.

If any of this resonates, and if you are building something similar, thinking about how to structure your own AI stack, or just trying to figure out where the real leverage is in all of this… I would genuinely like to hear from you. The work is more interesting when the thinking is shared.