Memory is becoming the next operating layer

I remember first coming across the academic concepts and definitions of short-term and long-term memory around 2000, through my friend Junchol Park, whom I usually call JC.

JC is a domain expert in data science and neuroscience. During his undergraduate years, he introduced me to what he was learning through his coursework and lab experiments, especially around how different parts of the brain serve different functions related to memory.

Some parts helped us process immediate signals. Some parts helped us retain patterns. Some parts helped us coordinate movement, judgment, language, emotion, and memory. What stayed with me was not only the biology, but the architecture.

The brain was not one monolithic intelligence engine. It was a system of specialized modules, coordinated through signals, context, and repetition.

I remember one subway ride when I mentioned to JC that I could imagine a kind of digital brain, not as a metaphor only, but as a system and software layer where people could dump their short-term and long-term memories, then retrieve them on demand.

Intelligence without memory is not enough.

When OpenClaw first went viral, I started looking at it from a system architecture perspective. I wanted to understand how its components were organized, how it shaped the developer experience, and why it resonated so quickly with the community.

A fellow developer community leader and entrepreneur, Jason Kneen, was also actively posting on X/Twitter about OpenClaw and its architecture. His posts helped me speed up my own understanding. Sometimes the best way to learn is not just through documentation, but through the lens of another builder who is taking the time to explain what matters.

And earlier this year, I was also inspired by several developers talking more actively about persistent memory for AI agents. That brought the old idea of a digital brain back into a more practical frame. Not memory as a vague concept. Persistent memory as infrastructure.

Most AI agents today still behave like they have impressive short-term memory and fragile long-term memory. A context window can hold a conversation, a task, a document, or a decision for a limited period. But once the session ends, the agent often loses the thread unless the platform has its own memory layer or the user manually restates the context.

For casual use, this may be acceptable. For real work, it becomes expensive.

A founder does not want to re-explain the same product strategy every week. A consultant does not want to restate the same client constraints across tools. A developer does not want to remind every agent which architecture decisions were already made. A team does not want business knowledge scattered across prompts, folders, Slack threads, cloud drives, and disconnected AI sessions.

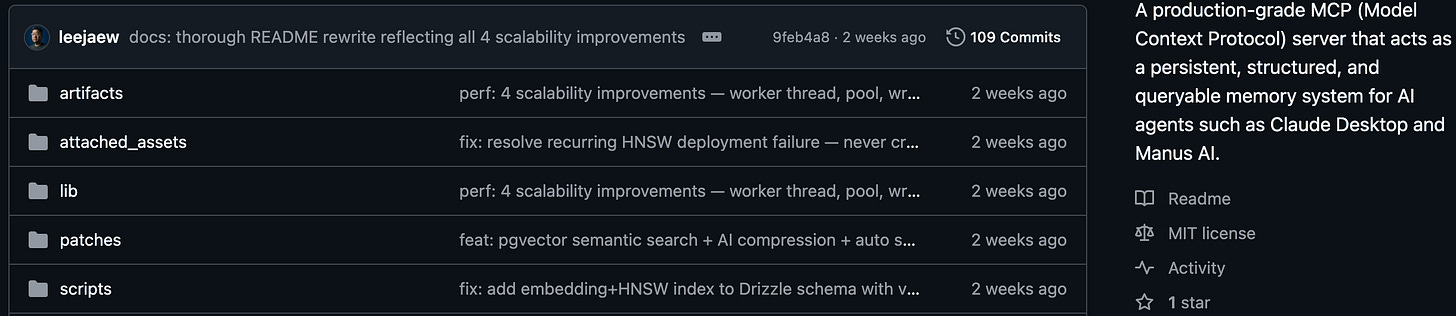

This is why I built Agent Memory Dump earlier this year.

Agent Memory Dump is an MCP memory server designed to act as a shared memory layer across multiple AI agents. It stores conversation turns, prompts, responses, tool calls, decisions, and code as structured observations in PostgreSQL. It groups those observations into sessions, tags them by agent and timestamp, and makes the memory available through MCP tools so different agents can read and write to the same store.

The important part was not simply saving text. The important part was making memory searchable, portable, and reusable.

The system uses hybrid search, combining full-text search, vector similarity through pgvector, and recency weighting. It also creates session summaries so long histories can remain useful without flooding the context window. In practical terms, this means an agent can retrieve relevant past work even when the wording has changed, even when the memory is old, and even when the full history would be too large to load.

That solved one problem I was feeling personally: my agents needed a shared memory dump.

If I moved between Claude Desktop, Manus AI, and OpenClaw, I did not want each one to behave as if it had never met me before. The memory layer needed to sit outside the agent, not inside a single platform.

That was the first pattern.

Memory should not belong to one interface.

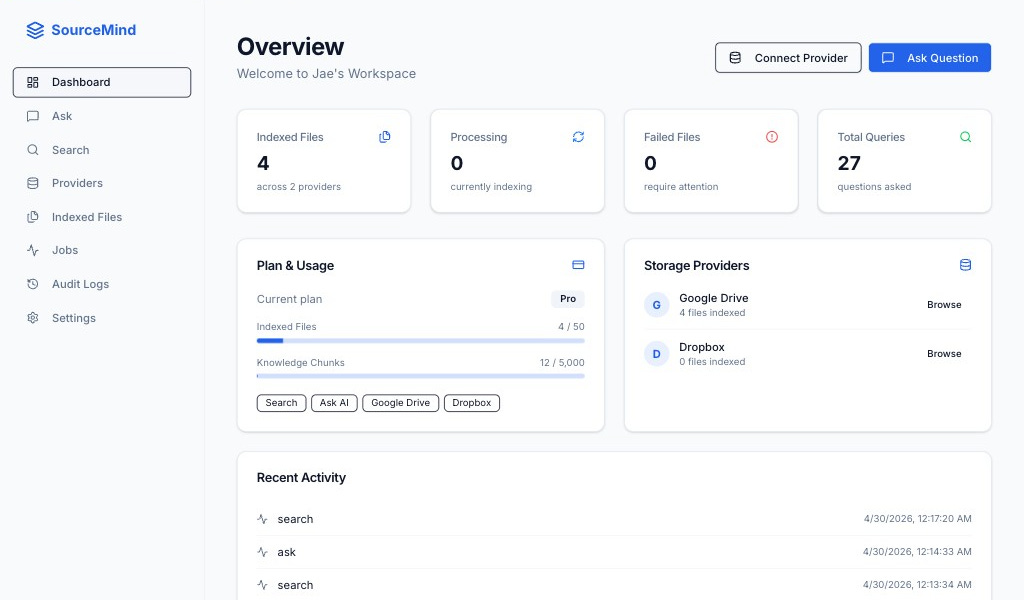

After the Easter holiday, I started building and testing a new project called SourceMind.

At first glance, SourceMind may sound like another AI search tool for cloud storage. But for me, it came from a very specific realization.

Agent memory is not enough if the underlying reference sources are still fragmented.

It is useful for an agent to remember what I instructed it to do. It is useful for an agent to remember the responses it generated. It is useful for an agent to recall decisions, summaries, and past workflows.

But much of our actual work does not live inside the agent conversation.

It lives in PDFs, pitch decks, spreadsheets, contracts, strategy notes, CSV exports, JSON files, client folders, Dropbox archives, Google Drive structures, and documents created years before the AI agent entered the workflow.

So I needed a second layer.

SourceMind is an AI intelligence layer designed to unify and interrogate data across fragmented cloud storage ecosystems. It connects to Google Drive and Dropbox, lets users browse folders and select files or entire folders for indexing, then processes those files through an ingestion pipeline. PDFs, text files, CSVs, and JSON files are chunked, embedded, stored with pgvector and full-text search vectors, and made searchable through hybrid retrieval.

The user can then ask natural-language questions and receive cited, RAG-powered answers linked back to the original documents. That citation layer matters.

A generic agent response may sound confident, but confidence is not the same as traceability. If an answer is based on a source file, I want to know which file, which passage, and which context shaped the response. For serious work, especially in consulting, enterprise transformation, finance, education, hospitality, logistics, or regulated environments, traceability is not optional.

SourceMind also handles the operational layer around this problem. It supports OAuth connections to cloud storage, encrypted tokens at rest, background indexing jobs with status and retry logic, audit logs, and administrative visibility. These may not be the glamorous parts of AI, but they are the parts that make the system usable beyond a demo.

This is also why SourceMind is different from simply activating a Google Drive connector inside one AI agent.

A connector gives one agent access to one storage environment within that agent’s workflow. That can be convenient. But convenience is not architecture.

SourceMind is built as a centralized intelligence layer. It is designed to sit across storage providers, selected folders, indexed files, search methods, citations, and audit trails. It can become a shared retrieval foundation for multiple agents, workflows, and business use cases. Instead of asking one AI tool to temporarily inspect a drive, SourceMind prepares the knowledge layer so agents can operate on a more reliable foundation.

That distinction matters because AI augmentation is not only about prompting better. It is about preparing the system around the prompt.

When I think back to that early conversation with Junchol, I realize why the brain remains such a useful metaphor.

The brain does not treat intelligence as a single function. It has different areas and systems that influence memory, movement, judgment, emotion, perception, coordination, and action. These areas do not operate in isolation. They interact constantly. They receive signals, interpret context, strengthen pathways, and help us respond to the world.

In system architecture terms, you could call them modules or components. In product terms, you could call them layers of experience.

The AI platforms and agentic user experiences we are building today are beginning to mirror that structure. We have model layers, memory layers, tool layers, retrieval layers, orchestration layers, interface layers, permissions layers, and audit layers. Each one serves a different purpose. Each one changes what the user can trust the system to do.

A model without tools can speak, but it cannot act very far.

A model without memory can assist, but it cannot build continuity.

A model without retrieval can reason from its training, but it cannot reliably use the user’s current operating reality.

A model without auditability may be impressive, but it becomes difficult to deploy in serious environments.

This is why I believe the next wave of AI-native businesses will not be built by simply attaching an LLM-powered chatbot to existing workflows.

They will be built by preparing the data, memory, retrieval, and orchestration layers around the business itself.

Whether the industry is hardware manufacturing, fintech, edutech, hospitality, logistics, professional services, or any other vertical, the challenge is similar.

Most organizations want AI augmentation, but their knowledge is scattered. Their files are fragmented. Their decisions are undocumented. Their workflows depend on tacit knowledge. Their data lives in different platforms, owned by different teams, with different levels of freshness and quality.

Then they ask an AI system to behave intelligently.

But intelligence depends on what the system can see, retrieve, remember, and verify.

This is where founders and operators need to become more disciplined. The work is not just choosing the latest model or adding another AI feature. The work is preparing the organization so AI has something coherent to augment.

That means building reference sources. It means deciding what should become persistent memory. It means designing how agents access files, how answers are cited, how decisions are stored, how knowledge is updated, and how different systems share context.

The companies that do this well will move differently. Their AI systems will not only answer questions. They will build continuity. Their agents will not only execute tasks. They will understand prior decisions. Their teams will not only generate content. They will compound institutional learning. This is the path from AI-augmented to AI-native.

A founder question I keep returning to is not just what we should build with AI, but what our organizations need to remember. I think the better starting question is simpler.

What does your organization need to remember?

Our brains are not powerful because they remember everything perfectly. They are powerful because they organize signals into patterns that help us act.

That is what I learned again while building Agent Memory Dump and SourceMind.

Memory is not a feature.

Memory is becoming infrastructure.