Get skills, not prompts.

Why the way you instruct your AI agent matters more than the model you choose

In my previous post, I introduced my co-founder and what I loosely call “my team”: a growing ensemble of AI agents that I’ve been building, training, and deploying across my work. I talked about the agent stack, briefly touched on skills like my Odoo expert, and hinted at the direction I was heading. This is the follow-up I promised. And it starts with a gym session.

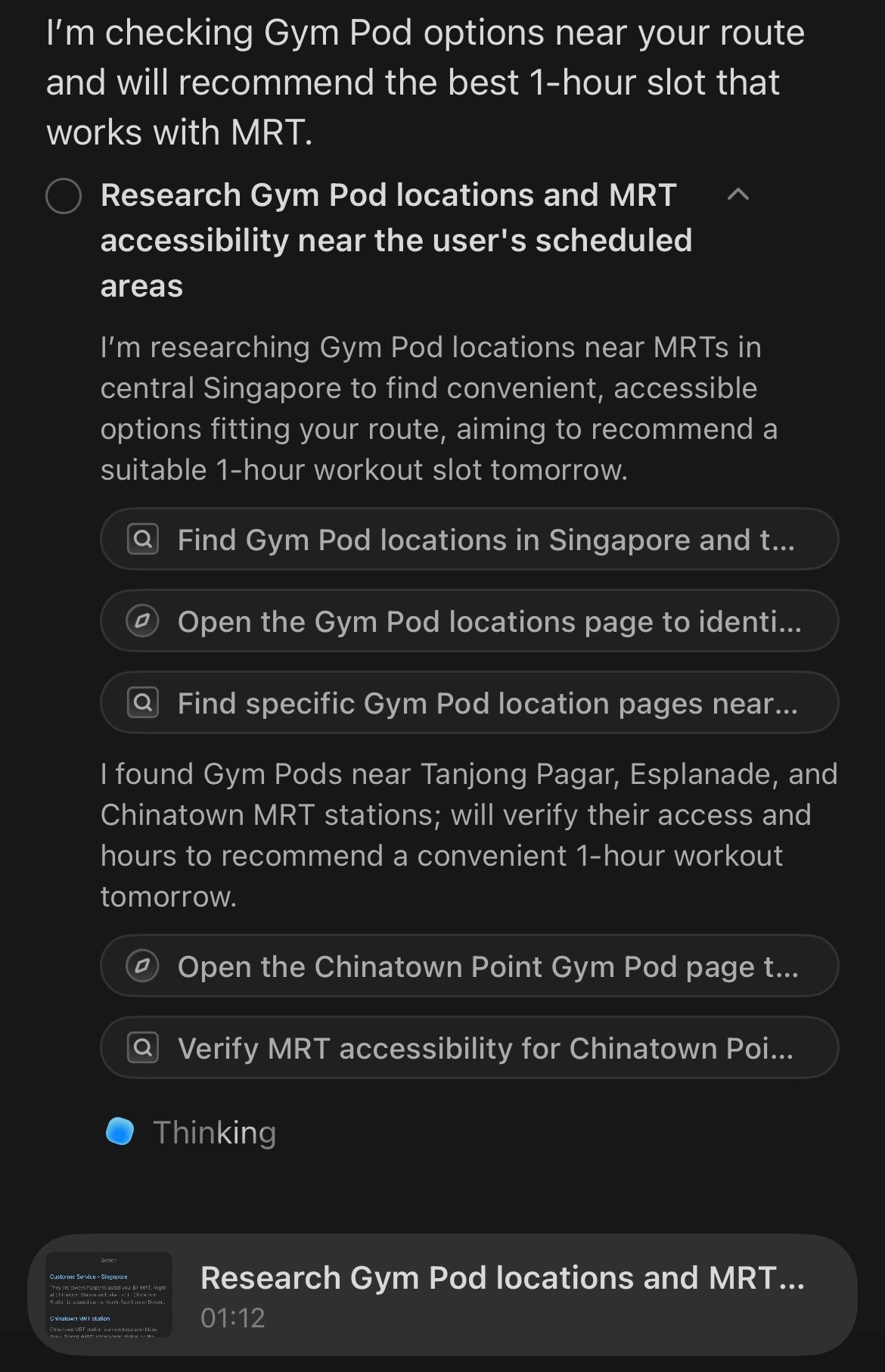

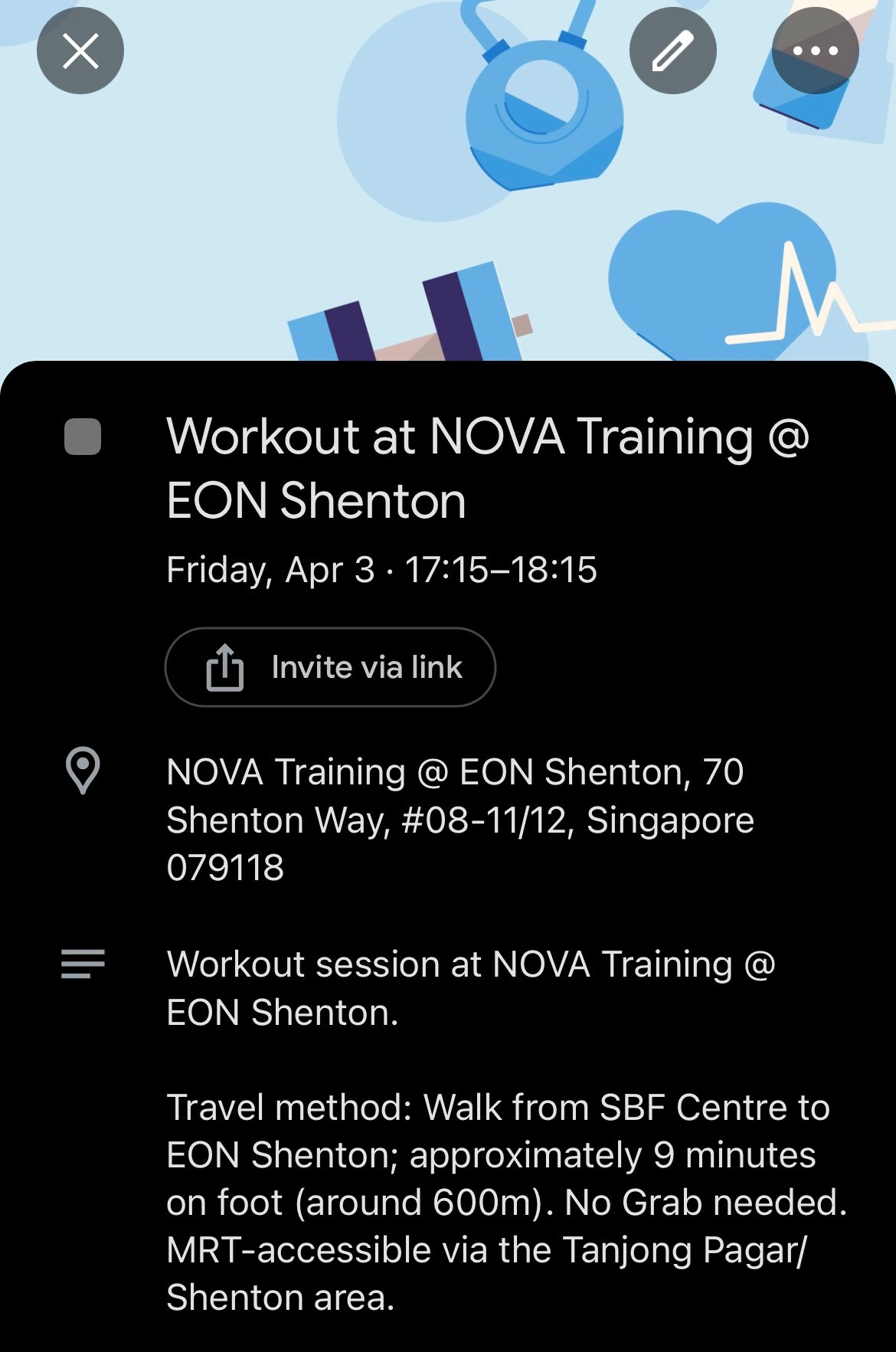

A few days ago, I gave my personal assistant agent a straightforward instruction:

“Find a 1 hour workout time slot at a Gym Pod location for tomorrow that fits my schedule. Prioritize gym locations accessible by MRT. I prefer not to take a Grab taxi.”

That’s it. Single instruction. No follow-up. No clarification needed.

Now, if I had asked my agent something simpler, like “Summarize my calendar for tomorrow” or “Add a meeting at 3pm,” I’d honestly tell you: just open the calendar app. It would be faster. It would be cheaper. You don’t need an AI agent to read your own schedule back to you.

But this wasn’t that kind of request.

To fulfill what I asked, my agent had to do something a calendar app never could. It had to pull my calendar events for the next day, identify the fixed commitments and their locations, calculate the open windows between them, look up Gym Pod locations across Singapore, filter for ones accessible by MRT from where I’d already be during those open windows, cross-reference travel times, and find a slot where a one-hour workout, plus transit, actually fit without blowing up the rest of my day.
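To make the "open windows" step concrete, here is a minimal sketch of the calendar arithmetic involved. It is not what my agent actually runs: the `Event` class, both function names, the sample events, and the fixed 30-minute transit time are assumptions for illustration. A real run would pull events from a calendar API and look up transit times per location.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    title: str
    start: datetime
    end: datetime


def free_windows(events, day_start, day_end):
    """Return (start, end) gaps between events inside [day_start, day_end]."""
    windows = []
    cursor = day_start
    for event in sorted(events, key=lambda e: e.start):
        # A gap exists only if the next event starts after the point covered so far.
        if event.start > cursor and cursor < day_end:
            windows.append((cursor, min(event.start, day_end)))
        cursor = max(cursor, event.end)
    if cursor < day_end:
        windows.append((cursor, day_end))
    return windows


def workout_slots(windows, workout, transit_each_way):
    """Keep windows that fit transit there, the workout, and transit back."""
    needed = workout + 2 * transit_each_way
    slots = []
    for start, end in windows:
        if end - start >= needed:
            begin = start + transit_each_way
            slots.append((begin, begin + workout))
    return slots


# Hypothetical day: three fixed commitments between 08:00 and 20:00.
day = datetime(2025, 1, 6)
events = [
    Event("Standup", day.replace(hour=9), day.replace(hour=10, minute=30)),
    Event("Client lunch", day.replace(hour=13), day.replace(hour=14)),
    Event("Review call", day.replace(hour=16), day.replace(hour=17)),
]
windows = free_windows(events, day.replace(hour=8), day.replace(hour=20))
slots = workout_slots(windows, timedelta(hours=1), timedelta(minutes=30))
for start, end in slots:
    print(f"{start:%H:%M} to {end:%H:%M}")
```

With these sample events, the one-hour gap before the standup is rejected because it cannot absorb transit both ways, and three candidate slots remain. The agent's real job is harder, since transit time changes with each location, but the shape of the problem is the same.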

That’s not a prompt response. That’s a workflow.

And the difference between those two things is exactly what this post is about.

The prompt trap

There’s a pattern I see constantly. Someone discovers ChatGPT or Claude, gets excited, and starts collecting prompts. They download prompt packs from LinkedIn. They screenshot “Top 10 Prompts for Productivity.” They paste in a paragraph and hope for magic.

I get it. The first time an LLM writes a decent email for you or summarizes a document in three seconds, it feels like the future just arrived at your desk. But here’s the thing: that’s inference. You gave the model an input, it gave you an output. That’s powerful, sure. But it’s also just the surface.

What most people don’t realize is that the real unlock isn’t in the quality of a single prompt. It’s in how you structure the knowledge, context, and workflow logic that sits behind the prompt. It’s in what the AI industry calls skills.

And skills are fundamentally different from prompts.

What a skill actually is

On platforms like Anthropic’s Claude and Manus AI, a skill is a structured, reusable instruction set that tells an agent how to think about a domain, not just how to respond to one question. Think of it as the difference between handing someone a script for one phone call versus training them for the job.

A prompt says: “Write me a professional email declining this meeting.”

A skill says: “You are a personal assistant. You have access to my calendar, my contact list, and my location history. You understand my scheduling preferences, my transit constraints, and my priority framework. When I ask you to handle scheduling, you don’t just look at open time. You consider travel time, energy management, the nature of adjacent commitments, and my stated preferences about transportation. You confirm before booking. You flag conflicts. You learn from corrections.”

See the difference?

A skill encodes domain knowledge, behavioral rules, workflow sequences, tool access permissions, and contextual understanding into a persistent framework that the agent can draw on every time it’s activated. It doesn’t disappear after one exchange. It compounds.

The technical structure varies by platform, but the principle is consistent. On Claude, skills are bundled as instruction sets with references, tool configurations, and behavioral directives. On Manus AI, the architecture is similar: skills carry identity context, domain expertise, decision-making logic, and integration hooks. In both cases, the skill acts as an operating manual for the agent within a specific domain.
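As a rough illustration of the idea, a skill can be pictured as structured data that gets rendered into instructions each time the agent is activated. The sketch below is not the file format of Claude, Manus AI, or any other platform; the `Skill` class, its field names, and the sample values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical container for the pieces a skill carries."""
    name: str
    identity: str
    domain_knowledge: list[str] = field(default_factory=list)
    rules: list[str] = field(default_factory=list)
    tools: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Turn the structured skill into reusable instruction text."""
        lines = [f"Skill: {self.name}", self.identity, "", "Domain knowledge:"]
        lines += [f"- {item}" for item in self.domain_knowledge]
        lines += ["", "Rules:"]
        lines += [f"- {rule}" for rule in self.rules]
        lines += ["", "Tools available: " + ", ".join(self.tools)]
        return "\n".join(lines)


scheduling = Skill(
    name="personal-scheduling",
    identity="You are a personal assistant handling scheduling.",
    domain_knowledge=[
        "Consider travel time between commitments, not just open time.",
        "Prefer MRT-accessible locations over taxi rides.",
    ],
    rules=["Confirm before booking.", "Flag conflicts."],
    tools=["calendar", "maps", "location"],
)
print(scheduling.render())
```

The point of the structure is reuse: the same object is rendered on every activation, so the briefing happens once instead of being retyped into every prompt.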

This is why my personal assistant agent could handle the gym request without a ten-paragraph prompt from me. The skill already carried the context: who I am, where I live, how I move around Singapore, what tools it has access to (calendar, maps, location data), and what “fits my schedule” actually means in practice. I didn’t need to explain any of that in the moment. The skill had already been built.

The first takeaway: Task design is the real skill

Here’s what I want people to understand, especially founders and operators: the magic isn’t in the model. It’s in how you define and distribute tasks so that an agent can execute through a genuine agentic workflow.

When I asked my agent to find a gym slot, I wasn’t asking it to think for me. I was asking it to act for me, to chain together a sequence of subtasks that would have taken me fifteen to twenty minutes of app-switching, map-checking, and calendar-squinting. The agent understood the fixed variables (my schedule, event locations), applied the constraints I care about (MRT access, no Grab), and worked through the problem step by step.

If you only use AI to generate text responses (summarize, rephrase, draft), you’re using about ten percent of what’s available to you right now. That’s not a criticism. Inference is valuable. But it’s not the same as transforming your workflow into an agentic environment where the AI doesn’t just respond but operates.

The shift is this: stop thinking of AI as a tool you query. Start thinking of it as a teammate you brief.

And like any good teammate, the quality of the briefing determines the quality of the output. But unlike a prompt you type fresh every time, a skill means you only have to brief once. After that, the agent carries the playbook.

From personal to enterprise: The Odoo story

Let me take this from personal productivity into business operations, because that’s where the implications get serious.

In my previous post, I briefly mentioned that my agents have Odoo expert skills. As an official Odoo certified consultant and Learning Partner, I’ve spent enough time inside the platform to know both its power and its complexity. Let me unpack what that combination of domain experience and agent skills actually means, and what it made possible.

Recently, I developed a custom MCP (Model Context Protocol) server that allows my agents to connect directly with Odoo ERP systems. For those unfamiliar, Odoo is a comprehensive open-source ERP platform that handles everything from CRM and accounting to inventory, HR, project management, and more. MCP is the protocol layer that lets AI agents communicate with external tools and data sources in a structured way. Think of it as the plumbing that connects the agent’s brain to the systems it needs to operate in.

Building that MCP server took me two hours. And I want to be specific about what “building an MCP server” actually means, because the scope matters.

The core development alone included writing a full Odoo XML-RPC client with authentication, session handling, and error mapping. It meant writing 16 distinct tool definitions, each with proper input schemas and validation, so the agent knows exactly what operations are available and how to call them correctly. On top of that: a JSON-RPC 2.0 handler covering the core protocol methods (initialize, tools/list, tools/call), a FastAPI server supporting both Streamable HTTP and SSE transports, a STDIO transport with an entry point that handles transport switching, and config/environment loading to make the whole thing portable across different Odoo instances.
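For readers who have not seen an MCP server from the inside, here is a stripped-down sketch of the JSON-RPC 2.0 dispatch covering those three methods. It is not my server's code. The `search_partners` tool, its handler, the server name, and the protocol version string are illustrative assumptions, and the sketch skips transports, notifications, and full schema validation.

```python
import json

# One hypothetical tool definition; a real server would register many more.
TOOLS = {
    "search_partners": {
        "description": "Search Odoo contacts by name (illustrative only).",
        "inputSchema": {
            "type": "object",
            "properties": {"name": {"type": "string"}},
            "required": ["name"],
        },
        "handler": lambda args: f"Would search res.partner for {args['name']!r}",
    },
}


def handle(request: dict) -> dict:
    """Dispatch one JSON-RPC 2.0 request to the matching MCP method."""
    req_id = request.get("id")
    method = request.get("method")
    params = request.get("params") or {}

    def ok(result):
        return {"jsonrpc": "2.0", "id": req_id, "result": result}

    def error(code, message):
        return {"jsonrpc": "2.0", "id": req_id,
                "error": {"code": code, "message": message}}

    if method == "initialize":
        return ok({
            "protocolVersion": "2025-03-26",  # assumed spec revision
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "odoo-mcp-sketch", "version": "0.1.0"},
        })
    if method == "tools/list":
        return ok({"tools": [
            {"name": name, "description": tool["description"],
             "inputSchema": tool["inputSchema"]}
            for name, tool in TOOLS.items()
        ]})
    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(-32602, f"Unknown tool: {params.get('name')}")
        args = params.get("arguments") or {}
        missing = [k for k in tool["inputSchema"]["required"] if k not in args]
        if missing:
            return error(-32602, f"Missing arguments: {missing}")
        text = tool["handler"](args)
        return ok({"content": [{"type": "text", "text": text}], "isError": False})
    return error(-32601, f"Method not found: {method}")


request = {"jsonrpc": "2.0", "id": 3, "method": "tools/call",
           "params": {"name": "search_partners", "arguments": {"name": "Ada"}}}
print(json.dumps(handle(request), indent=2))
```

The input schema is what lets the agent know how to call a tool correctly: it is advertised through tools/list and checked again before the handler runs.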

Then there’s testing and debugging, deployment and infrastructure, and documentation. This is not a weekend hack or a quick script. A senior developer, someone comfortable with XML-RPC, JSON-RPC, FastAPI, and the MCP specification, would realistically need 32 to 48 hours to ship all of that to production quality. That’s roughly a full workweek.

I did it in two hours. Not because I’m faster than a senior developer. Because my agent, equipped with the right skills and the right context about what I was building, handled the heavy lifting while I focused on the architectural decisions and the parts that required my judgment.

Two hours to design, develop, test, and deploy a fully functional bridge between my AI agents and any Odoo environment. That server can now handle any task that Odoo’s APIs expose: creating records, reading data, updating workflows, triggering automations. The full scope.
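Underneath, "any task that Odoo’s APIs expose" comes down to a small number of XML-RPC calls. Here is a minimal sketch of such a client using Python's standard `xmlrpc.client` and Odoo's documented `/xmlrpc/2/common` and `/xmlrpc/2/object` endpoints. The `OdooClient` class and its method names are my own illustration rather than the client inside my server, and the URL and credentials in the usage note are placeholders.

```python
import xmlrpc.client


class OdooClient:
    """Thin wrapper around Odoo's external XML-RPC API (illustrative sketch)."""

    def __init__(self, url, db, username, password, common=None, models=None):
        self.db = db
        self.username = username
        self.password = password
        # Proxies can be injected for testing; otherwise point at the real endpoints.
        if common is None:
            common = xmlrpc.client.ServerProxy(f"{url}/xmlrpc/2/common")
        if models is None:
            models = xmlrpc.client.ServerProxy(f"{url}/xmlrpc/2/object")
        self._common = common
        self._models = models
        self._uid = None

    def login(self):
        """Authenticate once and cache the user id for later calls."""
        uid = self._common.authenticate(self.db, self.username, self.password, {})
        if not uid:
            raise PermissionError("Odoo rejected the credentials")
        self._uid = uid
        return uid

    def call(self, model, method, *args, **kwargs):
        """Run any model method through execute_kw, logging in on first use."""
        if self._uid is None:
            self.login()
        return self._models.execute_kw(
            self.db, self._uid, self.password, model, method, list(args), kwargs
        )

    def search_read(self, model, domain, fields, limit=10):
        return self.call(model, "search_read", domain, fields=fields, limit=limit)

    def create(self, model, values):
        return self.call(model, "create", values)
```

Against a live instance, usage would look something like `OdooClient("https://odoo.example.com", "demo_db", "user@example.com", "api-key").search_read("res.partner", [["is_company", "=", True]], ["name"])`. Each MCP tool then becomes a thin, schema-checked wrapper around `call`.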

But the MCP server alone isn’t what made the next part possible. What made it possible was the skill layer sitting on top.

My agents carry Odoo expert skills, built from official Odoo documentation, functional references, and workflow logic that I’ve programmed based on years of working with ERP systems. The skill doesn’t just know what Odoo is. It understands how Odoo modules relate to each other, how data flows between apps, what a properly configured sales pipeline looks like, how inventory valuation affects accounting entries, and what steps are required to set up a functional instance from scratch.

So when I decided to put together a demo Odoo environment for a football academy (a soccer training organization with players, coaches, schedules, memberships, and equipment), I didn’t sit down and click through Odoo’s interface for three days. I gave my agent the business context and said, essentially: “Here’s what this organization does. Set it up.”

The agent installed the appropriate Odoo apps and modules. It created the organizational structure. It populated the system with context-relevant, branded demo data: player profiles, coaching staff, training schedules, membership tiers, inventory for equipment and kits. The works. The entire setup, from a blank Odoo instance to a fully populated, functional demo environment, took two hours.

There was one hiccup. Midway through, the agent created some duplicate player records. But here’s the thing: because the skill included self-correction logic (something I built into the workflow instructions from the beginning), the agent caught the error, identified the duplicates, cleaned them up, and continued. No intervention from me. No panicked Slack message. It just handled it.
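The skill's self-correction instructions are written in natural language, not code, but the check they lead to is easy to picture. Below is one possible sketch of duplicate detection: group records by a normalized key, keep the earliest record, and mark the rest for cleanup. The function name, the choice of `name` and `birthdate` as the matching key, and the sample players are assumptions for illustration.

```python
from collections import defaultdict


def find_duplicates(records, key_fields=("name", "birthdate")):
    """Return the ids of records that repeat an earlier record's key."""
    groups = defaultdict(list)
    for record in records:
        # Normalize so "Sam Tan " and "sam tan" land in the same group.
        key = tuple(str(record.get(f, "")).strip().lower() for f in key_fields)
        groups[key].append(record)

    to_remove = []
    for group in groups.values():
        group.sort(key=lambda r: r["id"])
        # Keep the lowest id (the first one created), flag the rest.
        to_remove.extend(r["id"] for r in group[1:])
    return sorted(to_remove)


# Hypothetical demo data with one accidental duplicate.
players = [
    {"id": 1, "name": "Sam Tan", "birthdate": "2012-04-01"},
    {"id": 2, "name": "Maya Lim", "birthdate": "2011-09-15"},
    {"id": 3, "name": "sam tan ", "birthdate": "2012-04-01"},
]
print(find_duplicates(players))
```

The flagged ids would then be removed or archived through the same Odoo tools the agent used to create the records in the first place.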

Now, let me put that in perspective. I’ve worked with ERP systems across my career: as a certified Odoo consultant, as an engineering leader integrating enterprise platforms for clients like UPS, Samsung, and Hyundai, and as a founder building products on top of complex data architectures. I know what this kind of work costs.

A human ERP consultant, someone with functional certification and pre-sales or technical consulting experience, would need a minimum of 24 to 32 dedicated hours to achieve the same result. That’s three to four full workdays in theory. In practice, factoring in context-switching, client communication, revision cycles, and the inevitable “let me check the documentation” moments, you’re looking at a full week of billable time, if not longer.

My agent did it in two hours. Not because the agent is smarter than an experienced consultant. But because the agent had the right skill, the right tool access, and the right workflow architecture to execute without friction.

The architecture that makes this work

Let me break down the stack so this isn’t abstract.

At the base, you have the AI model. Claude, GPT, or whatever foundation model you’re working with. This is the reasoning engine. It’s powerful, but on its own, it’s like having a brilliant analyst locked in a room with no phone, no computer, and no files.

Next, you have tools and integrations: MCP servers, API connections, browser access, calendar hooks, database connectors. These are the hands and eyes. They let the agent interact with the real world: read your calendar, query a database, navigate a web interface, create records in an ERP.

Then you have skills: the structured instruction sets that tell the agent how to operate within a domain. This is the training, the playbook, the institutional knowledge. A skill carries the why and how, not just the what.

And finally, you have context: the specific information about your situation, your business, your preferences, your constraints. Context is what makes the same skill produce different (and correct) outputs for different users or scenarios.

When all four layers work together (model, tools, skills, context), you get genuine agentic behavior. The agent doesn’t just answer questions. It plans, executes, self-corrects, and delivers outcomes.

Strip away any one of those layers, and the experience degrades. A model without tools can only talk. Tools without skills produce random actions. Skills without context produce generic outputs. And context without a model is just a database.

This is why downloading a prompt from someone’s LinkedIn post and pasting it into ChatGPT will never replicate what a properly skilled agent can do. A prompt reaches only the model. The system needs all four layers.

The mindset shift

I want to close with something that I think matters more than any technical architecture.

Agentic workflows are powerful when you’ve done the homework. When you’ve assessed your existing workflows, defined your tasks clearly, established what “done” looks like, and understood where an agent can genuinely add leverage versus where it’s just adding complexity.

But asking an agent to save you from what you don’t know? That’s not leveraging AI. That’s outsourcing your thinking, and no model, no matter how good, will consistently save you from that.

I believe the better starting point is this: skill up. Be open to learning. Be willing to pivot from the way you’ve always done things. That’s not easy. I understand the anxiety around AI replacing jobs, disrupting industries, changing the rules of the game mid-season. But fear-driven inaction is a worse strategy than imperfect experimentation.

If you’re a founder, an operator, a builder, an aspiring entrepreneur: start by understanding your own workflows deeply enough to teach them to an agent. That exercise alone will make you better at your job, even before the agent does anything. And when you do hand it off, you’ll be handing off a well-defined play, not a vague hope.

Perhaps the quality of a response generated by Claude versus ChatGPT on pure inference shouldn’t be your north star. The real question isn’t which model gives a better answer to a single prompt. The real question is: which system, with model, tools, skills, and context working together, can reliably execute the workflows that actually move your work forward?

That’s where the game is heading. And the players who understand the playbook will have a serious advantage.